Today we look at a twist in the road. Not so much about Tableau, but rather the options for bringing data into Tableau.

Let me tell you a story - a few months back I was very fortunate to be in the right place at the right time. I had contacted a slick data scraping company in the UK called ScraperWiki about getting a complete list of Twitter followers for a client. While I was emailing them, I threw into my last line something like "BTW - you guys should figure out how to directly connect the data into Tableau". I love startups because people actually read emails. It wasn't more than a day or two before I received an email back stating they were actually developing a Tableau connector, and were interested in whether I had an opinion on what it should look like. I lack many things, but an opinion is almost never one of them....

Long story short, after a couple of Skype calls (UK-Atlanta-San Fran-Seattle) the world now has an OData connector that feeds data out of ScraperWiki (including Twitter data). Ta-da! On behalf of Ian and his stud team across the pond, you're welcome :) So, today we'll show just how simple the whole thing is - I give you Tweets, ScraperWiki and OData.

So again, I'm not starting in Tableau on this one. I'll be heading straight to www.ScraperWiki.com and creating an account to scrape Twitter based on keywords. Given yesterday's team effort, I'm going to look at Tweets with @Slalom and we'll put those together in a quick dashboard. I select Search for Tweets and I'm asked what I want to search for:

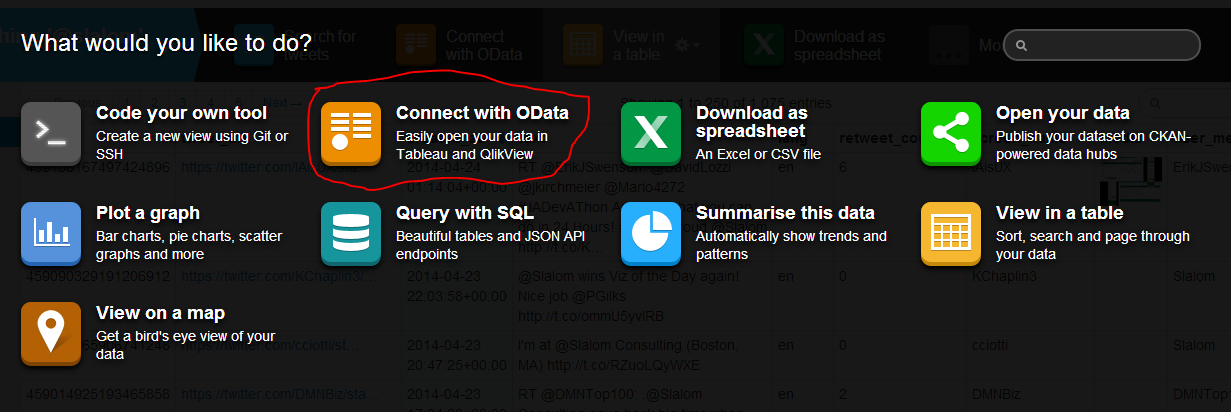

You'll be asked to authorize the app, and you'll have to enter a Twitter account and password. And ScraperWiki is off to the races! Give it anywhere from a few minutes to a few hours and it will go back up to two weeks to get tweets matching your criteria. You can also set it to continue capturing matching tweets going forward. Now, for the coolest part - you can make this connectable via OData (per that fun story at the beginning). Disclaimer - this option is only available to "Premium Level" customers, i.e. it's $29/month. We're cool and have an account at this level, so when I click on the @Slalom Tweet data set I made, I see this option:

Once I do that I'm given a URL right here:

I copy that URL and pop open Tableau, select the OData connection and paste in the URL:

There's no user name or password. Why? Because, remember, OData is the only type of dynamic data that can be used on Tableau Public. Click OK and Tableau sucks the data in and creates an extract. To get new data from the web, just refresh the data source at any time.
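For the curious, here is a rough sketch of what a tool has to do with an OData URL like this one: read the feed and turn each entry into a row. It assumes the feed is served in the classic OData Atom (XML) format; I haven't confirmed exactly what ScraperWiki sends, and the sample payload, the field names (`screen_name`, `text`) and the helper `parse_odata_feed` are all made up for illustration.

```python
import xml.etree.ElementTree as ET

# Namespaces used by classic OData Atom feeds.
NS = {
    "atom": "http://www.w3.org/2005/Atom",
    "m": "http://schemas.microsoft.com/ado/2007/08/dataservices/metadata",
    "d": "http://schemas.microsoft.com/ado/2007/08/dataservices",
}

# Hypothetical sample payload: the field names and values are invented,
# not taken from a real ScraperWiki feed.
SAMPLE_FEED = """<feed xmlns="http://www.w3.org/2005/Atom"
      xmlns:m="http://schemas.microsoft.com/ado/2007/08/dataservices/metadata"
      xmlns:d="http://schemas.microsoft.com/ado/2007/08/dataservices">
  <entry>
    <content type="application/xml">
      <m:properties>
        <d:screen_name>example_user</d:screen_name>
        <d:text>Hello @Slalom</d:text>
      </m:properties>
    </content>
  </entry>
  <entry>
    <content type="application/xml">
      <m:properties>
        <d:screen_name>another_user</d:screen_name>
        <d:text>Nice viz, @Slalom</d:text>
      </m:properties>
    </content>
  </entry>
</feed>"""


def parse_odata_feed(xml_text):
    """Return one dict per Atom entry, keyed by property name."""
    root = ET.fromstring(xml_text)
    rows = []
    for props in root.iterfind("atom:entry/atom:content/m:properties", NS):
        # Each child tag looks like "{namespace}field_name"; keep the field name.
        rows.append({child.tag.split("}", 1)[1]: child.text for child in props})
    return rows


if __name__ == "__main__":
    for row in parse_odata_feed(SAMPLE_FEED):
        print(row["screen_name"], "-", row["text"])
```

To try this against a real feed, you would pass in the response body from your own OData URL (for example, read with `urllib.request.urlopen`) instead of `SAMPLE_FEED`. Tableau handles all of this for you, which is the whole point of the connector.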

So below is the visualization I made, with the Live OData connection! As you can see we have tweets in there as recent as yesterday's (4/23) Viz of the Day win by Peter Gilks! Congrats Peter!

I hope you enjoyed that one. I'd encourage you to think about other ways you might be able to leverage the ScraperWiki tool. If you're already doing so, drop me a line as I'd love to hear about it. Many thanks!

Nelson

Thanks for the great writeup!

Our pricing model has moved on a bit: we're now offering the OData connector on our free 30 day trial, as well as on our two paid account levels at $9 and $29 per month.

I'm using the OData connector regularly, as much to avoid managing downloaded files as to get live data. I've used it to get stock data from the Yahoo! Finance API. And we're working on getting our Table Xtract tool, which is a general table scraper, to serve up data in the right format for it.

We're always keen to hear from people about what data and functionality they want from our platform.
